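I. Introduction

Scrapy is an open-source, collaborative framework originally designed for page scraping (more precisely, web scraping). It lets you extract the data you need from websites in a fast, simple, and extensible way. Today Scrapy is used much more broadly: in data mining, monitoring, and automated testing, for fetching data returned by APIs (for example Amazon Associates Web Services), and as a general-purpose web crawler.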

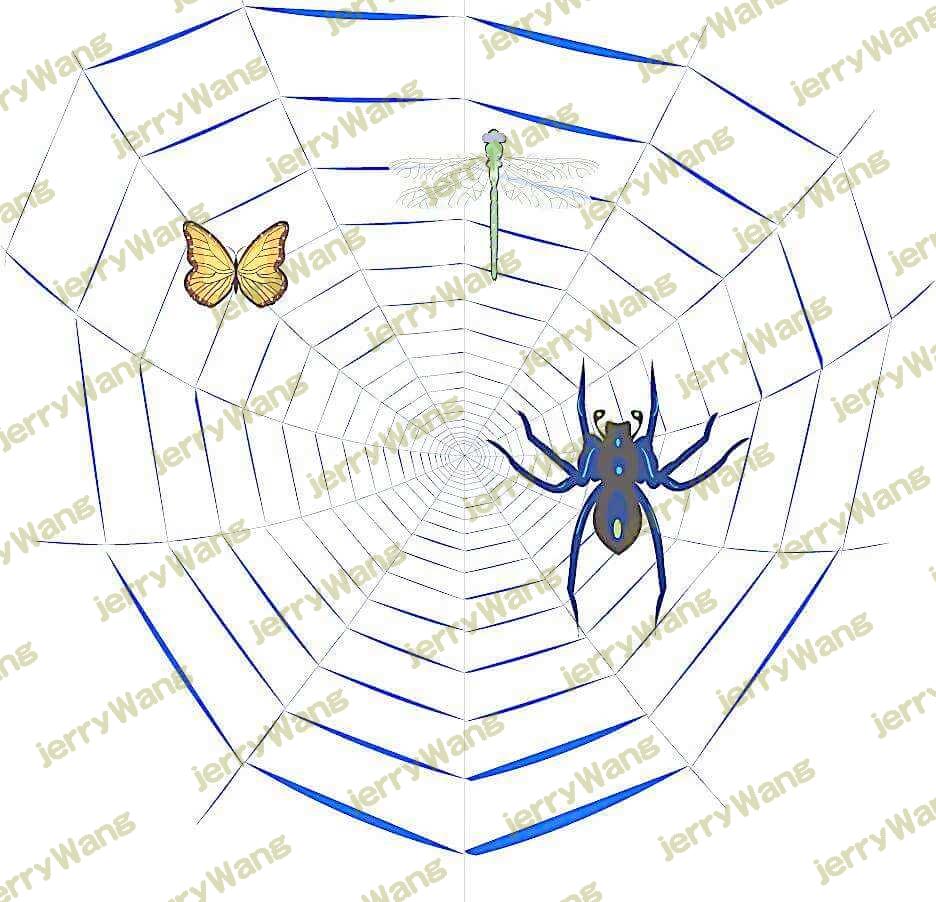

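Scrapy is built on Twisted, a popular event-driven networking framework for Python. As a result, Scrapy achieves concurrency with non-blocking (asynchronous) code. The overall architecture is roughly as follows.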

The data flow in Scrapy is controlled by the execution engine, and goes like this:

1. The Engine gets the initial Requests to crawl from the Spider.
2. The Engine schedules the Requests in the Scheduler and asks for the next Requests to crawl.
3. The Scheduler returns the next Requests to the Engine.
4. The Engine sends the Requests to the Downloader, passing through the Downloader Middlewares (see process_request()).
5. Once the page finishes downloading, the Downloader generates a Response (with that page) and sends it to the Engine, passing through the Downloader Middlewares (see process_response()).
6. The Engine receives the Response from the Downloader and sends it to the Spider for processing, passing through the Spider Middleware (see process_spider_input()).
7. The Spider processes the Response and returns scraped items and new Requests (to follow) to the Engine, passing through the Spider Middleware (see process_spider_output()).
8. The Engine sends processed items to Item Pipelines, then sends processed Requests to the Scheduler and asks for possible next Requests to crawl.
9. The process repeats (from step 1) until there are no more requests from the Scheduler.

Components:

Engine (ENGINE)

The engine controls the data flow between all components of the system and triggers events when certain actions occur. See the data flow section above for details.

Scheduler (SCHEDULER)

Downloader (DOWNLOADER)

Spiders (SPIDERS)

Item Pipelines (ITEM PIPELINES)

Downloader Middlewares

These sit between the Scrapy engine and the downloader. They process the requests passed from the ENGINE to the DOWNLOADER, as well as the responses passed from the DOWNLOADER back to the ENGINE. You can use a downloader middleware to:

- process a request just before it is sent to the Downloader (i.e. right before Scrapy sends the request to the website);
- change a received response before passing it to a spider;
- send a new Request instead of passing the received response to a spider;
- pass a response to a spider without fetching a web page;
- silently drop some requests.

Spider Middlewares

Official documentation: https://docs.scrapy.org/en/latest/topics/architecture.html
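The nine data-flow steps above can be modelled in a few lines of plain Python. This is only a toy sketch, not Scrapy's engine: `FAKE_WEB`, `download`, `parse` and `run_engine` are hypothetical names invented for the illustration, requests are plain URL strings, and nothing is asynchronous.

```python
from collections import deque

# Hypothetical stand-in for the network: URL -> page content.
FAKE_WEB = {
    "http://example.com/1": {"title": "Page 1", "links": ["http://example.com/2"]},
    "http://example.com/2": {"title": "Page 2", "links": []},
}


def download(url):
    """Downloader role: turn a request (here just a URL) into a response."""
    return {"url": url, **FAKE_WEB[url]}


def parse(response):
    """Spider role: yield one item, then any follow-up requests."""
    yield {"item": response["title"]}
    for link in response["links"]:
        yield link  # a plain string plays the role of a new Request


def run_engine(start_urls):
    """Engine role: move data between scheduler, downloader, spider, pipeline."""
    scheduler = deque(start_urls)        # steps 1-2: initial requests are scheduled
    seen = set(start_urls)
    pipeline_output = []
    while scheduler:                     # step 9: repeat until nothing is left
        url = scheduler.popleft()        # step 3: next request
        response = download(url)         # steps 4-5: download
        for result in parse(response):   # steps 6-7: spider processes the response
            if isinstance(result, dict):
                pipeline_output.append(result)   # step 8: items go to the pipeline
            elif result not in seen:
                seen.add(result)
                scheduler.append(result)         # step 8: requests go back to the scheduler
    return pipeline_output


items = run_engine(["http://example.com/1"])
print(items)
```

The point of the sketch is the division of labour: the spider never downloads anything itself, it only yields items and requests, and the loop decides where each one goes.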

II. Installation

On Windows:

1. pip3 install wheel
2. pip3 install lxml
3. pip3 install pyopenssl
4. Download and install pywin32: https://sourceforge.net/projects/pywin32/files/pywin32/
5. Download the Twisted wheel file: http://www.lfd.uci.edu/~gohlke/pythonlibs/
6. Run pip3 install <download directory>\Twisted-17.9.0-cp36-cp36m-win_amd64.whl
7. pip3 install scrapy

On other platforms:

1. pip3 install scrapy

III. Command-line tools

Getting help:

    scrapy -h
    scrapy <command> -h

Global commands: startproject, genspider, settings, runspider, shell, fetch, view, version

Project-only commands (these must be run from inside a project directory): crawl, check, list, edit, parse, bench

Official documentation: https://docs.scrapy.org/en/latest/topics/commands.html

Example usage

    scrapy startproject MyProject
    cd MyProject
    scrapy genspider baidu www.baidu.com

    scrapy settings --get XXX
    scrapy runspider baidu.py

    scrapy shell https://www.baidu.com
    # then, inside the interactive shell:
    #     response
    #     response.status
    #     response.body
    #     view(response)

    scrapy view https://www.taobao.com
    scrapy fetch --nolog --headers https://www.taobao.com

    scrapy version
    scrapy version -v

    scrapy crawl baidu
    scrapy check
    scrapy list
    scrapy parse http://quotes.toscrape.com/ --callback parse
    scrapy bench

IV. Project structure and a brief introduction to spider applications

    project_name/
        scrapy.cfg
        project_name/
            __init__.py
            items.py
            pipelines.py
            settings.py
            spiders/
                __init__.py
                spider1.py
                spider2.py
                spider3.py

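File descriptions:

- scrapy.cfg: the project's main configuration, used when deploying Scrapy. Spider-related settings live in settings.py.
- items.py: data storage templates for structured data, comparable to a Django Model.
- pipelines: data-processing behaviour, typically persisting the structured data.
- settings.py: the configuration file, for example recursion depth, concurrency, and download delay. Important: setting names must be uppercase, otherwise they are ignored. The correct form is USER_AGENT = 'xxxx'.
- spiders: the spider directory, where you create files and write the crawling rules.

Note: spider files are usually named after the site's domain.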
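The uppercase rule for settings.py can be pictured as a filter applied when a settings module is loaded. The sketch below is an illustration of that idea under my own assumptions, not Scrapy's loader: `load_settings` and the in-memory `fake_settings` module are hypothetical.

```python
import types


def load_settings(module):
    """Collect only the UPPERCASE names defined on a settings module."""
    return {key: getattr(module, key) for key in dir(module) if key.isupper()}


# Hypothetical settings module, built in memory for the demonstration.
fake_settings = types.ModuleType("fake_settings")
fake_settings.USER_AGENT = "xxxx"       # uppercase: picked up
fake_settings.user_agent = "ignored"    # lowercase: silently skipped

loaded = load_settings(fake_settings)
print(loaded)
```

A lowercase name raises no error; it simply never reaches the loaded settings, which is why such mistakes are easy to miss.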

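By default a spider can only be run from the command line. To run it from PyCharm, create a Python script in the project root (next to scrapy.cfg) and run that script:

    from scrapy.cmdline import execute

    # Equivalent to typing "scrapy crawl xiaohua" in a terminal
    execute(['scrapy', 'crawl', 'xiaohua'])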

About the Windows console encoding

    import io
    import sys

    # Re-wrap stdout so that printed text is encoded as gb18030
    sys.stdout = io.TextIOWrapper(sys.stdout.buffer, encoding='gb18030')

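V. Spiders

1. Introduction

- Spiders are classes that define how a site (or a group of sites) will be crawled, including how to perform the crawl and how to extract structured data from the pages.
- In other words, a Spider is where you define custom crawling and parsing behaviour for a particular site or group of sites.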

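2. A spider repeatedly does the following

Step 1. Generate the initial Requests and assign them a callback. The first requests are defined in the start_requests() method, which by default builds a Request for each URL in the start_urls list and uses the parse method as the callback. The callback is triggered automatically once the download completes and a response is returned.

Step 2. In the callback, parse the response and return a value. The return value can be one of four kinds:

- a dict containing the parsed data
- an Item object
- a new Request object (new Requests also need a callback)
- an iterable (containing Items or Requests)

Step 3. Parse the page content inside the callback. Scrapy's built-in Selectors are the usual choice, but you can equally use BeautifulSoup, lxml, or whatever you prefer.

Step 4. Persist the returned items, either to a database through the Item Pipeline component (https://docs.scrapy.org/en/latest/topics/item-pipeline.html) or to files through Feed exports (https://docs.scrapy.org/en/latest/topics/feed-exports.html).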

3. Scrapy provides five spider classes:

- scrapy.spiders.Spider (scrapy.Spider is equivalent to scrapy.spiders.Spider)
- scrapy.spiders.CrawlSpider
- scrapy.spiders.XMLFeedSpider
- scrapy.spiders.CSVFeedSpider
- scrapy.spiders.SitemapSpider

4. Importing and using them

    import scrapy
    from scrapy.spiders import Spider, CrawlSpider, XMLFeedSpider, CSVFeedSpider, SitemapSpider


    class AmazonSpider(scrapy.Spider):  # inherit from one of Scrapy's spider classes
        name = 'amazon'
        allowed_domains = ['www.amazon.cn']
        start_urls = ['http://www.amazon.cn/']

        def parse(self, response):
            pass

5. class scrapy.spiders.Spider

This is the simplest spider class. Every other spider class must inherit from it, including the ones you define yourself.

The class provides no special functionality. It only supplies a default start_requests method, which reads the URLs in start_urls, sends a request for each, and uses parse as the default callback.

    import scrapy


    class AmazonSpider(scrapy.Spider):
        name = 'amazon'
        allowed_domains = ['www.amazon.cn']
        start_urls = ['http://www.amazon.cn/']

        # Per-spider settings; these override the project-level values
        custom_settings = {
            'BOT_NAME': 'allen_Spider_Amazon',
            # The setting Scrapy reads is DEFAULT_REQUEST_HEADERS
            'DEFAULT_REQUEST_HEADERS': {
                'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8',
                'Accept-Language': 'en',
            },
        }

        def parse(self, response):
            pass

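scrapy.Spider attributes and methods in detail

name: Defines the spider's name. Scrapy uses this value to locate the spider, so it is required and must be unique. (In Python 2 this must be ASCII only.)

allowed_domains: Defines the domains that may be crawled. If OffsiteMiddleware is enabled (it is by default), requests for URLs that do not belong to the listed domains or their subdomains are not allowed. If the URL to crawl is https://www.example.com/1.html, add 'example.com' to the list.

start_urls: If no URLs are specified explicitly, the first requests are generated from the URLs in this list.

custom_settings: A dict of settings. When the spider runs, these values override the project-level configuration. custom_settings must be defined as a class attribute, because the settings are loaded before the class is instantiated.

settings: self.settings['SETTING_NAME'] gives access to the configuration in settings.py. If you have defined custom_settings, your own values still take precedence.

logger: The logger's name defaults to the spider's name.

    self.logger.debug('=============>%s' % self.settings['BOT_NAME'])

crawler: This attribute is set in the from_crawler class method.

from_crawler(crawler, *args, **kwargs): You probably won't need to override this directly, because the default implementation acts as a proxy to the __init__() method, calling it with the given arguments args and named arguments kwargs.

start_requests(): This method issues the first Requests and must return an iterable. Scrapy calls it when the spider is opened, and calls it only once. By default it takes each URL in start_urls and generates Request(url, dont_filter=True). To change the initial Requests, override this method. For example, to start with a POST request:

    import scrapy


    class MySpider(scrapy.Spider):
        name = 'myspider'

        def start_requests(self):
            return [scrapy.FormRequest("http://www.example.com/login",
                                       formdata={'user': 'john', 'pass': 'secret'},
                                       callback=self.logged_in)]

        def logged_in(self, response):
            # here you would extract links to follow and return Requests for
            # each of them, with another callback
            pass

parse(response): This is the default callback. Every callback must return an iterable of Request and/or dicts or Item objects.

log(message[, level, component]): Wrapper that sends a log message through the Spider's logger, kept for backwards compatibility. For more information see Logging from Spiders.

closed(reason): Triggered automatically when the spider finishes.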
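The precedence described for custom_settings (spider-level values win, project values fill the gaps) can be sketched with `collections.ChainMap`. This is a deliberately simplified stand-in: Scrapy resolves settings through its own priority mechanism, and the two dicts below are made-up examples.

```python
from collections import ChainMap

# Stand-in for values defined in settings.py (hypothetical).
project_settings = {"BOT_NAME": "Amazon", "DOWNLOAD_DELAY": 3}

# Stand-in for the spider's custom_settings class attribute (hypothetical).
custom_settings = {"BOT_NAME": "allen_Spider_Amazon"}

# Lookups try custom_settings first, then fall back to the project values.
effective = ChainMap(custom_settings, project_settings)

print(effective["BOT_NAME"])        # spider-level value wins
print(effective["DOWNLOAD_DELAY"])  # falls back to the project value
```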

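Deduplication: filtering out duplicate URLs

The deduplication rule should be shared by all spiders: once one spider has crawled a URL, the others should not crawl it again. There are several ways to implement this.

The simplest is to add a class attribute, visited = set(), and check it inside the parse callback:

    def parse(self, response):
        if response.url in self.visited:
            return None
        # .......
        self.visited.add(response.url)

A variant stores a hash of the URL instead of the URL itself:

    import hashlib

    def parse(self, response):
        url = hashlib.md5(response.request.url.encode('utf-8')).hexdigest()
        if url in self.visited:
            return None
        # .......
        self.visited.add(url)

Scrapy also ships with its own deduplication. In the configuration file:

    DUPEFILTER_CLASS = 'scrapy.dupefilters.RFPDupeFilter'
    DUPEFILTER_DEBUG = False
    JOBDIR = "/root/"  # directory where the record of visited requests is saved

Scrapy's default deduplication rule is RFPDupeFilter. It applies whenever a request is created with Request(..., dont_filter=False). Passing dont_filter=True tells Scrapy that this URL does not take part in deduplication.

You can also write your own rule modelled on RFPDupeFilter. Read the source (from scrapy.dupefilters import RFPDupeFilter) and follow the interface of BaseDupeFilter. For example, in a file dup.py inside the project:

    class UrlFilter(object):
        def __init__(self):
            self.visited = set()  # could equally be stored in a database

        @classmethod
        def from_settings(cls, settings):
            return cls()

        def request_seen(self, request):
            if request.url in self.visited:
                return True
            self.visited.add(request.url)
            return False

        def open(self):  # can return a deferred
            pass

        def close(self, reason):  # can return a deferred
            pass

        def log(self, request, spider):  # log that a request has been filtered
            pass

Then point the configuration file at it (replace project_name with your project's name):

    DUPEFILTER_CLASS = 'project_name.dup.UrlFilter'

In the source, see the enqueue_request method of Scheduler (from scrapy.core.scheduler import Scheduler), which calls self.df.request_seen(request).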
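Comparing raw URLs treats `?a=1&b=2` and `?b=2&a=1` as different pages. One common refinement is to deduplicate on a fingerprint of a normalised request. The sketch below is a simplified illustration with hypothetical names (`fingerprint`, `FingerprintFilter`); it is not the fingerprint that RFPDupeFilter computes, only the same general idea.

```python
import hashlib
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def fingerprint(method, url):
    """Hash the method plus a normalised URL (sorted query, no fragment)."""
    parts = urlsplit(url)
    query = urlencode(sorted(parse_qsl(parts.query, keep_blank_values=True)))
    canonical = urlunsplit(
        (parts.scheme, parts.netloc.lower(), parts.path or "/", query, "")
    )
    return hashlib.sha1(f"{method.upper()} {canonical}".encode("utf-8")).hexdigest()


class FingerprintFilter:
    """Same request_seen contract as the UrlFilter above, keyed on fingerprints."""

    def __init__(self):
        self.fingerprints = set()

    def request_seen(self, method, url):
        fp = fingerprint(method, url)
        if fp in self.fingerprints:
            return True
        self.fingerprints.add(fp)
        return False


dupefilter = FingerprintFilter()
first = dupefilter.request_seen("GET", "http://example.com/list?b=2&a=1")
second = dupefilter.request_seen("GET", "http://example.com/list?a=1&b=2#top")
third = dupefilter.request_seen("POST", "http://example.com/list?a=1&b=2")
print(first, second, third)
```

Including the HTTP method in the hash keeps a POST to the same URL from being mistaken for a repeat of an earlier GET.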

Examples

Example 1: one callback handling several start URLs.

    import scrapy


    class MySpider(scrapy.Spider):
        name = 'example.com'
        allowed_domains = ['example.com']
        start_urls = [
            'http://www.example.com/1.html',
            'http://www.example.com/2.html',
            'http://www.example.com/3.html',
        ]

        def parse(self, response):
            self.logger.info('A response from %s just arrived!', response.url)

Example 2: returning several items and Requests from a single callback.

    import scrapy


    class MySpider(scrapy.Spider):
        name = 'example.com'
        allowed_domains = ['example.com']
        start_urls = [
            'http://www.example.com/1.html',
            'http://www.example.com/2.html',
            'http://www.example.com/3.html',
        ]

        def parse(self, response):
            for h3 in response.xpath('//h3').extract():
                yield {"title": h3}

            for url in response.xpath('//a/@href').extract():
                yield scrapy.Request(url, callback=self.parse)

Example 3: using start_requests() instead of start_urls, and yielding Item objects.

    import scrapy
    from myproject.items import MyItem


    class MySpider(scrapy.Spider):
        name = 'example.com'
        allowed_domains = ['example.com']

        def start_requests(self):
            yield scrapy.Request('http://www.example.com/1.html', self.parse)
            yield scrapy.Request('http://www.example.com/2.html', self.parse)
            yield scrapy.Request('http://www.example.com/3.html', self.parse)

        def parse(self, response):
            for h3 in response.xpath('//h3').extract():
                yield MyItem(title=h3)

            for url in response.xpath('//a/@href').extract():
                yield scrapy.Request(url, callback=self.parse)

Passing arguments

You may need to pass arguments to a spider from the command line, for example the initial URL:

    scrapy crawl myspider -a category=electronics

The spider receives these arguments in its __init__ method:

    import scrapy


    class MySpider(scrapy.Spider):
        name = 'myspider'

        def __init__(self, category=None, *args, **kwargs):
            super(MySpider, self).__init__(*args, **kwargs)
            self.start_urls = ['http://www.example.com/categories/%s' % category]
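To make the link between `-a category=electronics` and the `category` keyword argument concrete, here is a rough sketch of how NAME=VALUE pairs can be turned into keyword arguments. `parse_spider_args` and `DemoSpider` are hypothetical helpers written for this illustration; they are not part of Scrapy.

```python
def parse_spider_args(pairs):
    """Turn ['category=electronics', ...] into {'category': 'electronics', ...}."""
    kwargs = {}
    for pair in pairs:
        key, sep, value = pair.partition("=")
        if not sep or not key:
            raise ValueError(f"expected NAME=VALUE, got {pair!r}")
        kwargs[key] = value  # values stay strings; convert them yourself if needed
    return kwargs


class DemoSpider:
    """Hypothetical stand-in for a spider; not a scrapy.Spider subclass."""

    def __init__(self, category=None, **kwargs):
        self.start_urls = ['http://www.example.com/categories/%s' % category]


spider = DemoSpider(**parse_spider_args(["category=electronics"]))
print(spider.start_urls)
```

Note that in this sketch every value arrives as a string, so an argument such as `page=2` would need an explicit `int()` inside `__init__`.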

6. Other generic spiders: https://docs.scrapy.org/en/latest/topics/spiders.html#generic-spiders

VI. Selectors

Topics covered:

1. // versus /
2. text
3. extract and extract_first: pulling content out of selector objects
4. Attributes: in XPath, prefix the attribute name with @
5. Nested lookups
6. Default values
7. Lookup by attribute
8. Fuzzy lookup by attribute
9. Regular expressions
10. Relative XPath
11. XPath with variables

The outputs below come from a sample page whose div with id "images" contains five links.

    response.selector.css()
    response.selector.xpath()
    # can be shortened to
    response.css()
    response.xpath()

    # 1. // versus /
    response.xpath('//body/a')
    response.css('div a::text')

    >>> response.xpath('//body/a')   # leading // searches the whole document; / after body means direct children
    []
    >>> response.xpath('//body//a')  # // after body means all descendants
    [<Selector xpath='//body//a' data='<a href="image1.html">Name: My image 1 <'>,
     <Selector xpath='//body//a' data='<a href="image2.html">Name: My image 2 <'>,
     <Selector xpath='//body//a' data='<a href="image3.html">Name: My image 3 <'>,
     <Selector xpath='//body//a' data='<a href="image4.html">Name: My image 4 <'>,
     <Selector xpath='//body//a' data='<a href="image5.html">Name: My image 5 <'>]

    # 2. text
    >>> response.xpath('//body//a/text()')
    >>> response.css('body a::text')

    # 3. extract and extract_first
    >>> response.xpath('//div/a/text()').extract()
    ['Name: My image 1 ', 'Name: My image 2 ', 'Name: My image 3 ', 'Name: My image 4 ', 'Name: My image 5 ']
    >>> response.css('div a::text').extract()
    ['Name: My image 1 ', 'Name: My image 2 ', 'Name: My image 3 ', 'Name: My image 4 ', 'Name: My image 5 ']
    >>> response.xpath('//div/a/text()').extract_first()
    'Name: My image 1 '
    >>> response.css('div a::text').extract_first()
    'Name: My image 1 '

    # 4. attributes
    >>> response.xpath('//div/a/@href').extract_first()
    'image1.html'
    >>> response.css('div a::attr(href)').extract_first()
    'image1.html'

    # 5. nested lookups
    >>> response.xpath('//div').css('a').xpath('@href').extract_first()
    'image1.html'

    # 6. default values
    >>> response.xpath('//div[@id="xxx"]').extract_first(default="not found")
    'not found'

    # 7. lookup by attribute
    response.xpath('//div[@id="images"]/a[@href="image3.html"]/text()').extract()
    response.css('#images a[href="image3.html"]::text').extract()

    # 8. fuzzy lookup by attribute
    response.xpath('//a[contains(@href,"image")]/@href').extract()
    response.css('a[href*="image"]::attr(href)').extract()

    response.xpath('//a[contains(@href,"image")]/img/@src').extract()
    response.css('a[href*="imag"] img::attr(src)').extract()

    response.xpath('//*[@href="image1.html"]')
    response.css('*[href="image1.html"]')

    # 9. regular expressions
    response.xpath('//a/text()').re(r'Name: (.*)')
    response.xpath('//a/text()').re_first(r'Name: (.*)')

    # 10. relative XPath
    >>> res = response.xpath('//a[contains(@href,"3")]')[0]
    >>> res.xpath('img')
    [<Selector xpath='img' data='<img src="image3_thumb.jpg">'>]
    >>> res.xpath('./img')
    [<Selector xpath='./img' data='<img src="image3_thumb.jpg">'>]
    >>> res.xpath('.//img')
    [<Selector xpath='.//img' data='<img src="image3_thumb.jpg">'>]
    >>> res.xpath('//img')  # a leading // searches the whole document again
    [<Selector xpath='//img' data='<img src="image1_thumb.jpg">'>,
     <Selector xpath='//img' data='<img src="image2_thumb.jpg">'>,
     <Selector xpath='//img' data='<img src="image3_thumb.jpg">'>,
     <Selector xpath='//img' data='<img src="image4_thumb.jpg">'>,
     <Selector xpath='//img' data='<img src="image5_thumb.jpg">'>]

    # 11. XPath with variables
    >>> response.xpath('//div[@id=$xxx]/a/text()', xxx='images').extract_first()
    'Name: My image 1 '
    >>> response.xpath('//div[count(a)=$yyy]/@id', yyy=5).extract_first()
    'images'
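The difference between / (direct children) and // (all descendants) can be tried without Scrapy by using the standard library's `xml.etree.ElementTree`. Two caveats: ElementTree implements only a subset of XPath and is swapped in here purely so the sketch runs anywhere, and `SAMPLE` is a cut-down, well-formed page that I wrote to resemble the one in the outputs above.

```python
import xml.etree.ElementTree as ET

# Hypothetical, well-formed miniature of the sample page.
SAMPLE = """
<html><body>
  <div id="images">
    <a href="image1.html">Name: My image 1 <br/><img src="image1_thumb.jpg"/></a>
    <a href="image2.html">Name: My image 2 <br/><img src="image2_thumb.jpg"/></a>
    <a href="image3.html">Name: My image 3 <br/><img src="image3_thumb.jpg"/></a>
  </div>
</body></html>
"""

root = ET.fromstring(SAMPLE)  # root is the <html> element

# "/" only matches direct children: <a> is not a direct child of <body>.
direct_children = root.findall("./body/a")

# "//" matches descendants at any depth.
descendants = root.findall("./body//a")
hrefs = [a.get("href") for a in descendants]
texts = [a.text for a in descendants]  # text before the first child element

# Lookup by attribute, then a relative path from the matched element.
image3 = root.find(".//div[@id='images']/a[@href='image3.html']")
thumb = image3.find("./img").get("src")

print(direct_children, hrefs, texts, thumb)
```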

https://docs.scrapy.org/en/latest/topics/selectors.html

VII. Items

https://docs.scrapy.org/en/latest/topics/items.html

VIII. Item Pipeline

Writing a custom pipeline

Example:

    from scrapy.exceptions import DropItem


    class CustomPipeline(object):
        def __init__(self, v):
            self.value = v

        @classmethod
        def from_crawler(cls, crawler):
            """
            Scrapy first uses getattr to check whether we defined from_crawler;
            if so, it calls this method to create the instance.
            """
            val = crawler.settings.getint('MMMM')
            return cls(val)

        def open_spider(self, spider):
            """
            Runs once when the spider starts.
            """
            print('000000')

        def close_spider(self, spider):
            """
            Runs once when the spider closes.
            """
            print('111111')

        def process_item(self, item, spider):
            # Process and persist the item here.
            # Returning the item hands it on to later pipelines;
            # raise DropItem() instead to discard it.
            return item
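How several pipelines cooperate can be sketched in plain Python: each `process_item` either returns the item for the next pipeline or raises DropItem to stop the chain. The code below is a model of that behaviour under my own assumptions, not Scrapy's implementation. `DropItem` is a local stand-in for `scrapy.exceptions.DropItem`, and `PricePipeline`, `TagPipeline`, `run_pipelines` and the priority mapping are hypothetical.

```python
class DropItem(Exception):
    """Local stand-in for scrapy.exceptions.DropItem."""


class PricePipeline:
    def process_item(self, item, spider):
        if item.get("price") is None:
            raise DropItem("missing price")  # later pipelines never see this item
        return item


class TagPipeline:
    def process_item(self, item, spider):
        return {**item, "seen_by": spider}


def run_pipelines(item, spider, pipelines):
    """pipelines maps pipeline objects to priorities; lower numbers run first."""
    for pipeline in sorted(pipelines, key=pipelines.get):
        try:
            item = pipeline.process_item(item, spider)
        except DropItem:
            return None
    return item


PIPELINES = {TagPipeline(): 300, PricePipeline(): 200}

kept = run_pipelines({"name": "book", "price": 10}, "amazon", PIPELINES)
dropped = run_pipelines({"name": "pen"}, "amazon", PIPELINES)
print(kept, dropped)
```

Because PricePipeline has the lower number it runs first, so an item without a price is discarded before TagPipeline is reached.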

Example

In settings.py:

    HOST = "127.0.0.1"
    PORT = 27017
    USER = "root"
    PWD = "123"
    DB = "amazon"
    TABLE = "goods"

    ITEM_PIPELINES = {
        'Amazon.pipelines.CustomPipeline': 200,
    }

In pipelines.py:

    from pymongo import MongoClient


    class CustomPipeline(object):
        def __init__(self, host, port, user, pwd, db, table):
            self.host = host
            self.port = port
            self.user = user
            self.pwd = pwd
            self.db = db
            self.table = table

        @classmethod
        def from_crawler(cls, crawler):
            """
            Scrapy first uses getattr to check whether we defined from_crawler;
            if so, it calls this method to create the instance.
            """
            HOST = crawler.settings.get('HOST')
            PORT = crawler.settings.get('PORT')
            USER = crawler.settings.get('USER')
            PWD = crawler.settings.get('PWD')
            DB = crawler.settings.get('DB')
            TABLE = crawler.settings.get('TABLE')
            return cls(HOST, PORT, USER, PWD, DB, TABLE)

        def open_spider(self, spider):
            """
            Runs once when the spider starts.
            """
            self.client = MongoClient('mongodb://%s:%s@%s:%s' % (self.user, self.pwd, self.host, self.port))

        def close_spider(self, spider):
            """
            Runs once when the spider closes.
            """
            self.client.close()

        def process_item(self, item, spider):
            # Persist the item, then hand it on to any later pipeline
            self.client[self.db][self.table].insert_one(dict(item))
            return item
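The open_spider / process_item / close_spider lifecycle shown above does not depend on MongoDB. As a sketch that runs without a database server, the version below swaps in the standard library's `sqlite3`. This is a substitution of mine, not what the article uses, and `SqlitePipeline` with its two-column schema is hypothetical.

```python
import sqlite3


class SqlitePipeline:
    """Same lifecycle as CustomPipeline above, with sqlite3 swapped in for MongoDB."""

    def __init__(self, path, table):
        self.path = path
        self.table = table  # assumed to be a trusted identifier from settings

    def open_spider(self, spider):
        # Runs once at start-up: open the connection and make sure the table exists.
        self.conn = sqlite3.connect(self.path)
        self.conn.execute(
            f"CREATE TABLE IF NOT EXISTS {self.table} (title TEXT, price REAL)"
        )

    def close_spider(self, spider):
        # Runs once at shutdown: flush pending writes and release the connection.
        self.conn.commit()
        self.conn.close()

    def process_item(self, item, spider):
        self.conn.execute(
            f"INSERT INTO {self.table} (title, price) VALUES (?, ?)",
            (item["title"], item["price"]),
        )
        return item  # hand the item on to any later pipeline


pipeline = SqlitePipeline(":memory:", "goods")
pipeline.open_spider(spider=None)
returned = pipeline.process_item({"title": "book", "price": 9.5}, spider=None)
rows = pipeline.conn.execute("SELECT title, price FROM goods").fetchall()
pipeline.close_spider(spider=None)
print(rows)
```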

https://docs.scrapy.org/en/latest/topics/item-pipeline.html

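IX. Downloader Middleware

What downloader middleware is used for:

1. Performing a custom download inside process_request, instead of using Scrapy's downloader.
2. Post-processing requests, for example:
   - setting request headers
   - setting cookies
   - adding a proxy

Scrapy's built-in proxy component:

    from scrapy.downloadermiddlewares.httpproxy import HttpProxyMiddleware
    from urllib.request import getproxies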

Downloader middleware

    class DownMiddleware1(object):
        def process_request(self, request, spider):
            """
            Called on every downloader middleware when a request needs to be downloaded.
            :param request:
            :param spider:
            :return:
                None: continue with the later middlewares and download the request
                Response object: stop calling process_request and start calling process_response
                Request object: stop the middleware chain and send the Request back to the scheduler
                raise IgnoreRequest: stop calling process_request and start calling process_exception
            """
            pass

        def process_response(self, request, response, spider):
            """
            Called when the download has finished and the response is on its way back.
            :param request:
            :param response:
            :param spider:
            :return:
                Response object: passed on to the other middlewares' process_response
                Request object: stop the middleware chain; the request is rescheduled for download
                raise IgnoreRequest: Request.errback is called
            """
            print('response1')
            return response

        def process_exception(self, request, exception, spider):
            """
            Called when a download handler or a process_request() (downloader middleware) raises an exception.
            :param request:
            :param exception:
            :param spider:
            :return:
                None: pass the exception on to the later middlewares
                Response object: stop calling the later process_exception methods
                Request object: stop the middleware chain; the request is rescheduled for download
            """
            return None
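Two of the return-value rules listed in the docstrings above (None means "keep going"; a Response means "skip the download") can be sketched as a small chain in plain Python. This is a model under my own assumptions, not Scrapy's downloader: requests and responses are plain dicts, the Request and IgnoreRequest cases are left out, and every name below is hypothetical.

```python
class HeaderMiddleware:
    def process_request(self, request):
        request.setdefault("headers", {})["User-Agent"] = "demo"
        return None  # None: continue with the next middleware

    def process_response(self, request, response):
        response["via"] = "HeaderMiddleware"
        return response


class CachedMiddleware:
    """Returns a response for known URLs, so no download happens."""

    def __init__(self, cache):
        self.cache = cache

    def process_request(self, request):
        return self.cache.get(request["url"])  # a response short-circuits the chain

    def process_response(self, request, response):
        return response


def fetch(request, middlewares, download):
    response = None
    for mw in middlewares:
        response = mw.process_request(request)
        if response is not None:
            break                      # stop calling process_request
    if response is None:
        response = download(request)   # nobody answered: really download
    for mw in reversed(middlewares):   # responses travel back in reverse order
        response = mw.process_response(request, response)
    return response


downloads = []


def download(request):
    downloads.append(request["url"])
    return {"url": request["url"], "body": "fresh"}


chain = [
    HeaderMiddleware(),
    CachedMiddleware({"http://example.com/a": {"url": "http://example.com/a", "body": "cached"}}),
]
cached = fetch({"url": "http://example.com/a"}, chain, download)
fresh = fetch({"url": "http://example.com/b"}, chain, download)
print(cached["body"], fresh["body"], downloads)
```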

Configuring a proxy

A helper module, proxy_handle.py, talks to a local proxy-pool service:

    import requests


    def get_proxy():
        return requests.get("http://127.0.0.1:5010/get/").text


    def delete_proxy(proxy):
        requests.get("http://127.0.0.1:5010/delete/?proxy={}".format(proxy))

The middleware in middlewares.py uses it:

    import time

    from Amazon.proxy_handle import get_proxy, delete_proxy


    class DownMiddleware1(object):
        def process_request(self, request, spider):
            """
            Called on every downloader middleware when a request needs to be downloaded.
            Returning None lets the later middlewares run and the download proceed.
            """
            proxy = "http://" + get_proxy()
            request.meta['download_timeout'] = 20
            request.meta["proxy"] = proxy
            print('Adding proxy %s for %s ' % (proxy, request.url), end='')
            print('meta is', request.meta)

        def process_response(self, request, response, spider):
            """
            Called when the download has finished and the response is on its way back.
            Returning the Response passes it on to the other middlewares.
            """
            print('Response status code', response.status)
            return response

        def process_exception(self, request, exception, spider):
            """
            Called when a download handler or a process_request() raises an exception.
            Returning a Request stops the chain and reschedules that request.
            """
            print('Proxy %s raised an exception while fetching %s: %s' % (request.meta['proxy'], request.url, exception))
            time.sleep(5)  # note: this blocks the whole crawler while it waits
            delete_proxy(request.meta['proxy'].split("//")[-1])
            request.meta['proxy'] = 'http://' + get_proxy()

            return request

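X. Spider Middleware

1. Spider middleware methods

    from scrapy import signals


    class SpiderMiddleware(object):
        # Not all methods need to be defined. If a method is missing, Scrapy
        # acts as if the middleware does not modify the passed objects.

        @classmethod
        def from_crawler(cls, crawler):
            # Scrapy uses this method to create the middleware instance.
            s = cls()
            # spider_opened fires when the current spider starts running
            crawler.signals.connect(s.spider_opened, signal=signals.spider_opened)
            return s

        def spider_opened(self, spider):
            print('I am spider 1 sent by allen: %s' % spider.name)

        def process_start_requests(self, start_requests, spider):
            # Called with the spider's start requests; must return only requests.
            print('start_requests1')
            for r in start_requests:
                yield r

        def process_spider_input(self, response, spider):
            # Called for each response that passes through the middleware into
            # the spider. Should return None or raise an exception.
            print("input1")
            return None

        def process_spider_output(self, response, result, spider):
            # Called with the results returned by the spider.
            # Must return an iterable of Request, dict or Item objects.
            print('output1')
            return result

        def process_spider_exception(self, response, exception, spider):
            # Called when the spider or another middleware's
            # process_spider_input() raises an exception.
            print('exception1')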

2. When the spider starts and when the initial requests are generated

Step 1: in settings.py, uncomment:

    SPIDER_MIDDLEWARES = {
        'Baidu.middlewares.SpiderMiddleware1': 200,
        'Baidu.middlewares.SpiderMiddleware2': 300,
        'Baidu.middlewares.SpiderMiddleware3': 400,
    }

Step 2: middlewares.py

    from scrapy import signals


    class SpiderMiddleware1(object):
        @classmethod
        def from_crawler(cls, crawler):
            s = cls()
            crawler.signals.connect(s.spider_opened, signal=signals.spider_opened)
            return s

        def spider_opened(self, spider):
            print('I am spider 1 sent by allen: %s' % spider.name)

        def process_start_requests(self, start_requests, spider):
            print('start_requests1')
            for r in start_requests:
                yield r


    class SpiderMiddleware2(object):
        @classmethod
        def from_crawler(cls, crawler):
            s = cls()
            crawler.signals.connect(s.spider_opened, signal=signals.spider_opened)
            return s

        def spider_opened(self, spider):
            print('I am spider 2 sent by allen: %s' % spider.name)

        def process_start_requests(self, start_requests, spider):
            print('start_requests2')
            for r in start_requests:
                yield r


    class SpiderMiddleware3(object):
        @classmethod
        def from_crawler(cls, crawler):
            s = cls()
            crawler.signals.connect(s.spider_opened, signal=signals.spider_opened)
            return s

        def spider_opened(self, spider):
            print('I am spider 3 sent by allen: %s' % spider.name)

        def process_start_requests(self, start_requests, spider):
            print('start_requests3')
            for r in start_requests:
                yield r

Step 3: output. When the spider starts, the middlewares run in order 1, 2, 3, and the initial requests pass through them in the same order:

    I am spider 1 sent by allen: baidu
    I am spider 2 sent by allen: baidu
    I am spider 3 sent by allen: baidu
    start_requests1
    start_requests2
    start_requests3

3. When process_spider_input returns None

Step 1: in settings.py, uncomment:

    SPIDER_MIDDLEWARES = {
        'Baidu.middlewares.SpiderMiddleware1': 200,
        'Baidu.middlewares.SpiderMiddleware2': 300,
        'Baidu.middlewares.SpiderMiddleware3': 400,
    }

Step 2: middlewares.py

    from scrapy import signals


    class SpiderMiddleware1(object):
        def process_spider_input(self, response, spider):
            print("input1")

        def process_spider_output(self, response, result, spider):
            print('output1')
            return result

        def process_spider_exception(self, response, exception, spider):
            print('exception1')


    class SpiderMiddleware2(object):
        def process_spider_input(self, response, spider):
            print("input2")
            return None

        def process_spider_output(self, response, result, spider):
            print('output2')
            return result

        def process_spider_exception(self, response, exception, spider):
            print('exception2')


    class SpiderMiddleware3(object):
        def process_spider_input(self, response, spider):
            print("input3")
            return None

        def process_spider_output(self, response, result, spider):
            print('output3')
            return result

        def process_spider_exception(self, response, exception, spider):
            print('exception3')

Step 3: analysis of the output

When the response comes back, it passes through spider middlewares 1, 2, 3 in order:

    input1
    input2
    input3

After the spider has processed it, the result passes through spider middlewares 3, 2, 1 in order:

    output3
    output2
    output1
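The ordering observed above (input 1, 2, 3, then output 3, 2, 1) follows from sorting the middlewares by their number on the way in and walking the same list backwards on the way out. The sketch below reproduces just that ordering in plain Python. `Middleware`, `handle` and the `calls` list are hypothetical names, and the real Scrapy machinery does considerably more.

```python
calls = []


class Middleware:
    """Hypothetical middleware that only records when it is called."""

    def __init__(self, label):
        self.label = label

    def process_spider_input(self, response):
        calls.append(f"input{self.label}")

    def process_spider_output(self, response, result):
        calls.append(f"output{self.label}")
        return result


# Same priorities as in the settings above; deliberately written out of order.
SPIDER_MIDDLEWARES = {Middleware(2): 300, Middleware(1): 200, Middleware(3): 400}


def handle(response, spider_callback):
    ordered = sorted(SPIDER_MIDDLEWARES, key=SPIDER_MIDDLEWARES.get)
    for mw in ordered:                  # towards the spider: ascending priority
        mw.process_spider_input(response)
    result = spider_callback(response)
    for mw in reversed(ordered):        # away from the spider: descending priority
        result = mw.process_spider_output(response, result)
    return result


result = handle({"url": "http://www.baidu.com/"}, lambda response: [response["url"]])
print(calls)
```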

4. When process_spider_input raises an exception

Step 1: in settings.py, uncomment:

    SPIDER_MIDDLEWARES = {
        'Baidu.middlewares.SpiderMiddleware1': 200,
        'Baidu.middlewares.SpiderMiddleware2': 300,
        'Baidu.middlewares.SpiderMiddleware3': 400,
    }

Step 2: middlewares.py

    from scrapy import signals


    class SpiderMiddleware1(object):
        def process_spider_input(self, response, spider):
            print("input1")

        def process_spider_output(self, response, result, spider):
            print('output1')
            return result

        def process_spider_exception(self, response, exception, spider):
            print('exception1')


    class SpiderMiddleware2(object):
        def process_spider_input(self, response, spider):
            print("input2")
            raise TypeError('input2 raised an exception')

        def process_spider_output(self, response, result, spider):
            print('output2')
            return result

        def process_spider_exception(self, response, exception, spider):
            print('exception2')


    class SpiderMiddleware3(object):
        def process_spider_input(self, response, spider):
            print("input3")
            return None

        def process_spider_output(self, response, result, spider):
            print('output3')
            return result

        def process_spider_exception(self, response, exception, spider):
            print('exception3')

Step 3: output. Once middleware 2 raises, input3 is never called, and the exception passes through the process_spider_exception methods of middlewares 3, 2, 1:

    input1
    input2
    exception3
    exception2
    exception1

5. Specifying an errback

Step 1: the spider

    import scrapy


    class BaiduSpider(scrapy.Spider):
        name = 'baidu'
        allowed_domains = ['www.baidu.com']
        start_urls = ['http://www.baidu.com/']

        def start_requests(self):
            yield scrapy.Request(url='http://www.baidu.com/',
                                 callback=self.parse,
                                 errback=self.parse_err,
                                 )

        def parse(self, response):
            pass

        def parse_err(self, res):
            # The errback's return value is passed to process_spider_output
            return [1, 2, 3, 4, 5]

Step 2: in settings.py, uncomment:

    SPIDER_MIDDLEWARES = {
        'Baidu.middlewares.SpiderMiddleware1': 200,
        'Baidu.middlewares.SpiderMiddleware2': 300,
        'Baidu.middlewares.SpiderMiddleware3': 400,
    }

Step 3: middlewares.py

    from scrapy import signals


    class SpiderMiddleware1(object):
        def process_spider_input(self, response, spider):
            print("input1")

        def process_spider_output(self, response, result, spider):
            print('output1', list(result))
            return result

        def process_spider_exception(self, response, exception, spider):
            print('exception1')


    class SpiderMiddleware2(object):
        def process_spider_input(self, response, spider):
            print("input2")
            raise TypeError('input2 raised an exception')

        def process_spider_output(self, response, result, spider):
            print('output2', list(result))
            return result

        def process_spider_exception(self, response, exception, spider):
            print('exception2')


    class SpiderMiddleware3(object):
        def process_spider_input(self, response, spider):
            print("input3")
            return None

        def process_spider_output(self, response, result, spider):
            print('output3', list(result))
            return result

        def process_spider_exception(self, response, exception, spider):
            print('exception3')

Step 4: output. With an errback defined, the exception raised by middleware 2 is handled by the errback, and its return value passes through process_spider_output of middlewares 3, 2, 1:

    input1
    input2
    output3 [1, 2, 3, 4, 5]
    output2 []
    output1 []
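The empty lists printed by output2 and output1 are consistent with `list(result)` exhausting a one-shot iterable before it is handed on. That is my reading of the output rather than something the example states. The sketch below reproduces the effect in plain Python; `peek_and_pass` and `peek_safely` are hypothetical helpers.

```python
def peek_and_pass(result, log):
    """Mimics print(list(result)) followed by return result."""
    log.append(list(result))   # this consumes a one-shot iterator
    return result              # ...so the next stage receives nothing


def peek_safely(result, log):
    """Materialise once, then pass the list itself along."""
    items = list(result)
    log.append(items)
    return items


log = []
broken = peek_and_pass(peek_and_pass(iter([1, 2, 3]), log), log)
broken_log = list(log)

log.clear()
fixed = peek_safely(peek_safely(iter([1, 2, 3]), log), log)

print(broken_log)   # second stage saw an empty result
print(log)          # both stages saw every element
```

If a middleware needs to inspect the results, returning the materialised list (or yielding each element while inspecting it) keeps the data available to the stages that follow.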

XI. Custom extensions

Custom extensions are similar to Django's signals.

1. Django's signals are extension points that Django reserves in advance; once a signal is triggered, the corresponding function runs.
2. The advantage of a Scrapy custom extension is that you can add functionality at any point you like, whereas the functionality provided by the other components can only run at fixed points.

The extension class:

    from scrapy import signals


    class MyExtension(object):
        def __init__(self, value):
            self.value = value

        @classmethod
        def from_crawler(cls, crawler):
            val = crawler.settings.getint('MMMM')
            obj = cls(val)

            # Register the handlers for the signals we care about
            crawler.signals.connect(obj.spider_opened, signal=signals.spider_opened)
            crawler.signals.connect(obj.spider_closed, signal=signals.spider_closed)

            return obj

        def spider_opened(self, spider):
            print('=============>open')

        def spider_closed(self, spider):
            print('=============>close')

Enable it in the configuration file:

    EXTENSIONS = {
        "Amazon.extentions.MyExtension": 200
    }
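The connect-then-fire pattern used by the extension above can be modelled with a very small dispatcher. This is a sketch of the general idea only: `SignalManager`, the two signal objects and `DemoExtension` are hypothetical names, not Scrapy's signal implementation.

```python
from collections import defaultdict


class SignalManager:
    """Minimal dispatcher: receivers subscribe to a signal, send() calls them."""

    def __init__(self):
        self._receivers = defaultdict(list)

    def connect(self, receiver, signal):
        self._receivers[signal].append(receiver)

    def send(self, signal, **kwargs):
        return [receiver(**kwargs) for receiver in self._receivers[signal]]


# Signals are just unique objects used as dictionary keys.
SPIDER_OPENED = object()
SPIDER_CLOSED = object()


class DemoExtension:
    def __init__(self):
        self.events = []

    def spider_opened(self, spider):
        self.events.append(("open", spider))

    def spider_closed(self, spider):
        self.events.append(("close", spider))


signals = SignalManager()
ext = DemoExtension()
signals.connect(ext.spider_opened, signal=SPIDER_OPENED)
signals.connect(ext.spider_closed, signal=SPIDER_CLOSED)

signals.send(SPIDER_OPENED, spider="amazon")
signals.send(SPIDER_CLOSED, spider="amazon")
print(ext.events)
```

The extension never calls into the crawler; it only registers callbacks, which is what lets it hook into any point where a signal is fired.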

Example settings.py:

    BOT_NAME = 'Amazon'

    SPIDER_MODULES = ['Amazon.spiders']
    NEWSPIDER_MODULE = 'Amazon.spiders'

    ROBOTSTXT_OBEY = False

Automatic throttling (the AutoThrottle extension):

    from scrapy.extensions.throttle import AutoThrottle

Design goals:

1. Be nicer to sites than the default download delay.
2. Automatically adjust Scrapy to the optimum crawling speed, so users do not have to tune the download delay themselves. The user only defines the maximum number of concurrent requests allowed, and the extension does the rest.

In Scrapy, the download latency is measured as the time elapsed between establishing the TCP connection and receiving the HTTP headers. Note that these latencies are very hard to measure accurately in a cooperative multitasking environment, because Scrapy may be busy processing a spider callback or unable to attend to downloads. Even so, the latencies give a reasonable estimate of how busy Scrapy (and ultimately the server) is, and the extension builds on that premise.

The auto-throttle algorithm adjusts the download delay according to a set of rules. The settings that control it are:

    AUTOTHROTTLE_ENABLED = True
    AUTOTHROTTLE_START_DELAY = 5
    DOWNLOAD_DELAY = 3
    AUTOTHROTTLE_MAX_DELAY = 10
    AUTOTHROTTLE_TARGET_CONCURRENCY = 16.0
    AUTOTHROTTLE_DEBUG = True
    CONCURRENT_REQUESTS_PER_DOMAIN = 16
    CONCURRENT_REQUESTS_PER_IP = 16

    DOWNLOADER_MIDDLEWARES = {
    }

    ITEM_PIPELINES = {
    }

Enabling the cache. Its purpose is to cache requests that have already been sent, or their responses, for later use. The relevant classes are:

    from scrapy.downloadermiddlewares.httpcache import HttpCacheMiddleware
    from scrapy.extensions.httpcache import DummyPolicy
    from scrapy.extensions.httpcache import FilesystemCacheStorage

Thread pool:

    REACTOR_THREADPOOL_MAXSIZE = 10

About the Twisted thread pool: http://twistedmatrix.com/documents/10.1.0/core/howto/threading.html

Adjusting the thread pool size in Twisted:

    from twisted.internet import reactor
    reactor.suggestThreadPoolSize(30)

Related Scrapy source: D:\python3.6\Lib\site-packages\scrapy\crawler.py

To check the number of threads in a process on Windows, use pslist (https://docs.microsoft.com/zh-cn/sysinternals/downloads/pslist or https://pan.baidu.com/s/1jJ0pMaM):

    pslist |findstr python

On Linux:

    top -p <process id>

Default settings file: D:\python3.6\Lib\site-packages\scrapy\settings\default_settings.py
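As I understand the rules in Scrapy's AutoThrottle documentation, the next delay is the average of the previous delay and a target delay (latency divided by the target concurrency), clamped between DOWNLOAD_DELAY and AUTOTHROTTLE_MAX_DELAY, and a non-200 response is not allowed to lower the delay. The function below is a sketch of those rules with the setting values from above as defaults. `next_delay` is a hypothetical name and this is not the extension's actual code.

```python
def next_delay(previous_delay, latency, status, *,
               target_concurrency=16.0, min_delay=3.0, max_delay=10.0):
    """Return the download delay to use after one response.

    previous_delay starts at AUTOTHROTTLE_START_DELAY; min_delay and max_delay
    stand in for DOWNLOAD_DELAY and AUTOTHROTTLE_MAX_DELAY.
    """
    # Target: the delay that would sustain target_concurrency parallel requests.
    target_delay = latency / target_concurrency

    # Move halfway from the previous delay towards the target.
    new_delay = (previous_delay + target_delay) / 2.0

    # Never go below the minimum or above the maximum.
    new_delay = min(max(min_delay, new_delay), max_delay)

    # Error responses tend to come back quickly; do not let them speed us up.
    if status != 200 and new_delay <= previous_delay:
        return previous_delay
    return new_delay


print(next_delay(5.0, 0.8, 200))     # fast response: delay falls to the minimum
print(next_delay(3.0, 160.0, 200))   # slow response: delay rises
print(next_delay(6.5, 0.16, 503))    # quick error: delay is left unchanged
```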

XIII. Scraping Amazon product information

Link: https://pan.baidu.com/s/1eSWBDh4